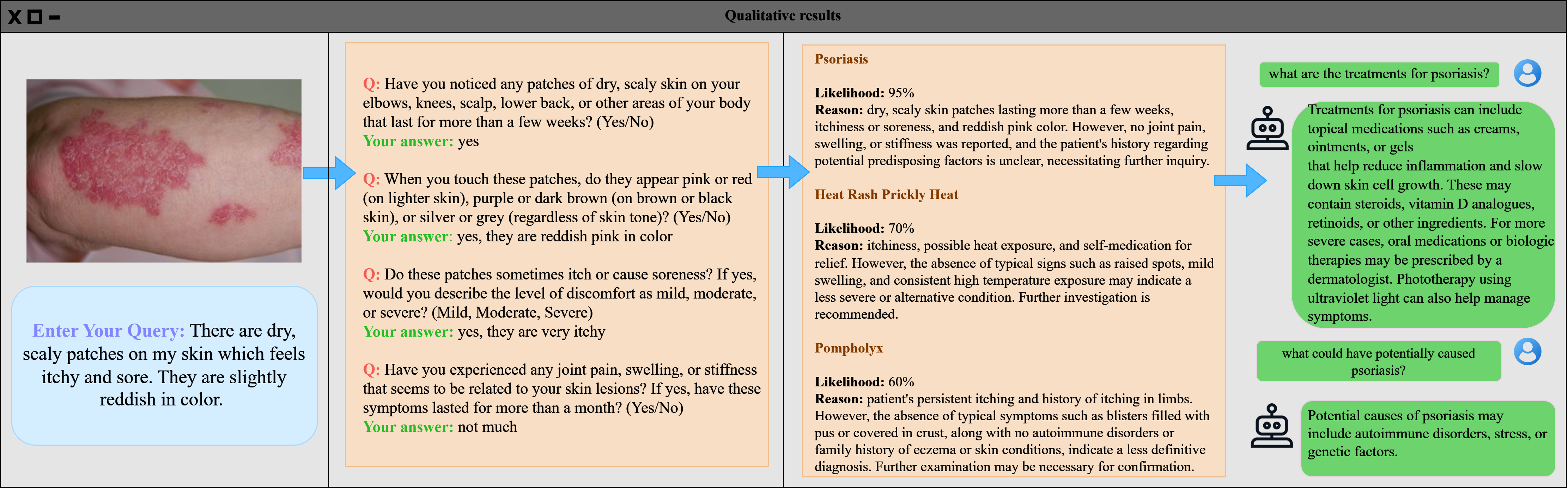

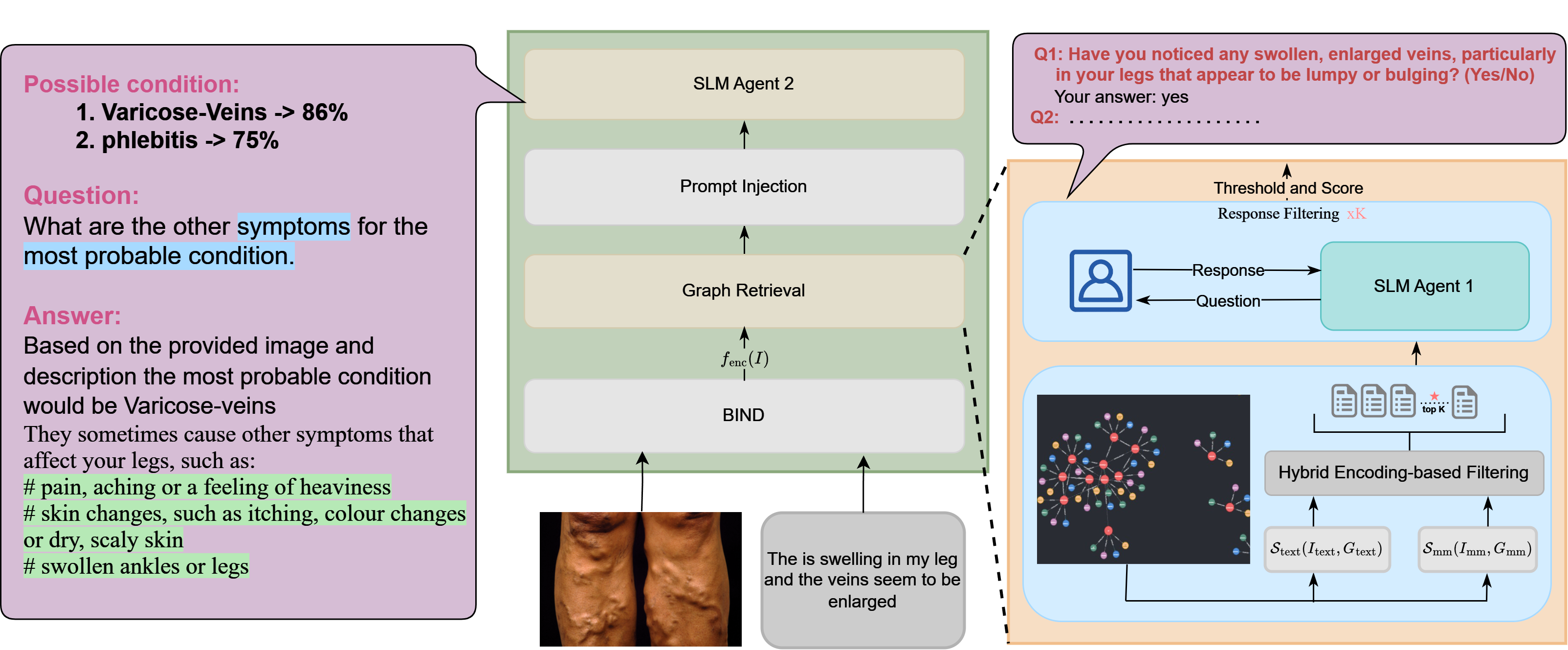

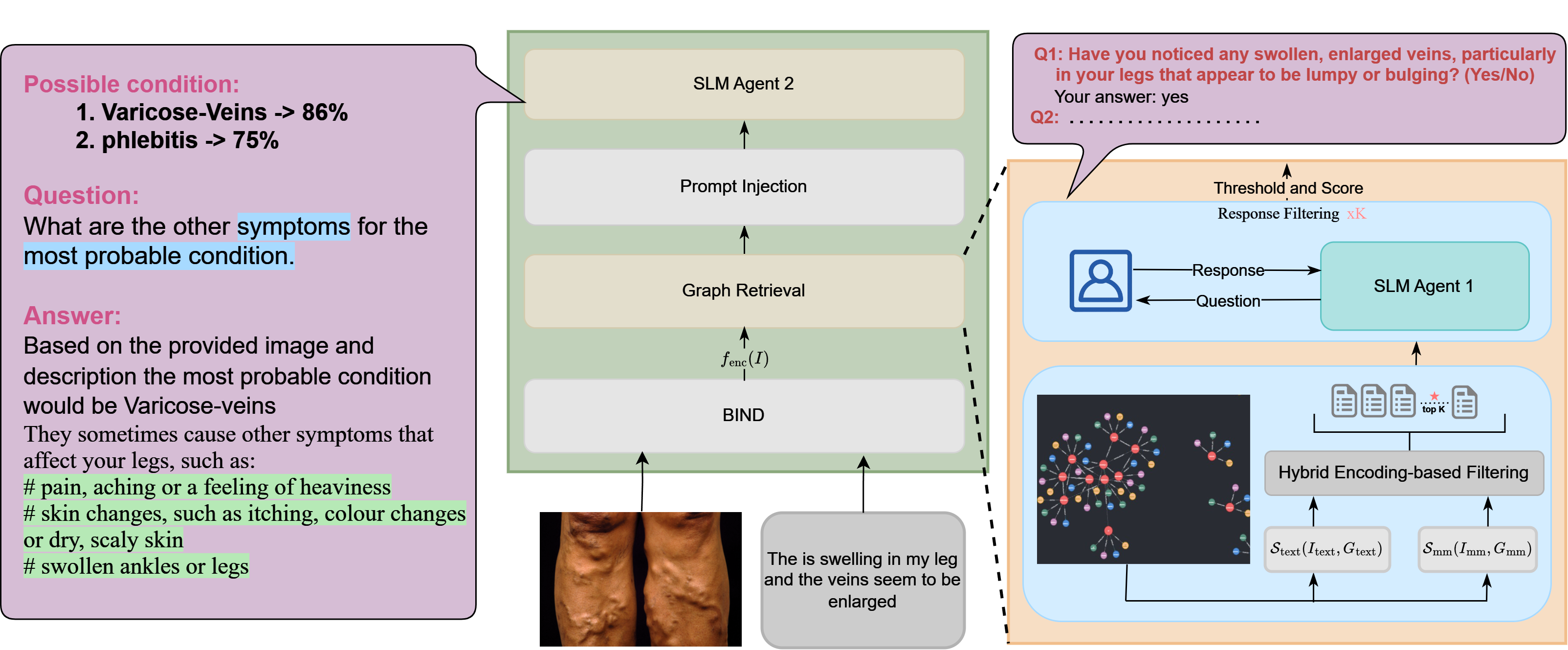

Med-GRIM integrates multimodal inputs, such as images and descriptions, through a series of specialized modules, including BIND, graph retrieval layers, and prompt injection. The model first assesses possible conditions and ranks them by probability, then dynamically retrieves relevant data and refines its responses iteratively. This approach allows it to present condition-agnostic insights and tailor responses based on user feedback. Through iterative filtering, Med-GRIM asks users clarifying questions and adapts its answers to specific input cues, as shown in the step-by-step reasoning for diagnosing conditions.
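The rank-then-filter loop described above can be illustrated with a minimal sketch. This is not the Med-GRIM implementation: the `Candidate` class, the scores, the symptom sets, and the question-selection heuristic (asking about the symptom that splits the remaining candidates most evenly) are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Candidate:
    """Hypothetical candidate condition with an initial likelihood score."""
    name: str
    score: float
    symptoms: set = field(default_factory=set)


def rank(candidates):
    """Sort candidates from most to least likely."""
    return sorted(candidates, key=lambda c: c.score, reverse=True)


def next_question(candidates):
    """Pick a symptom that splits the remaining candidates most evenly.

    Returns None when no symptom distinguishes the candidates.
    """
    counts = {}
    for c in candidates:
        for s in c.symptoms:
            counts[s] = counts.get(s, 0) + 1
    n = len(candidates)
    # Keep only symptoms present in some, but not all, candidates.
    informative = {s: k for s, k in counts.items() if 0 < k < n}
    if not informative:
        return None
    # Sorting first makes tie-breaking deterministic (alphabetical).
    return min(sorted(informative), key=lambda s: abs(informative[s] - n / 2))


def refine(candidates, answer_fn, max_rounds=5):
    """Iteratively filter candidates using yes/no answers from the user."""
    remaining = rank(candidates)
    for _ in range(max_rounds):
        if len(remaining) <= 1:
            break
        symptom = next_question(remaining)
        if symptom is None:
            break
        has_it = answer_fn(symptom)  # True if the user reports the symptom
        remaining = [c for c in remaining if (symptom in c.symptoms) == has_it]
    return remaining


# Illustrative placeholder data, not clinical guidance.
candidates = [
    Candidate("eczema", 0.5, {"itching", "redness", "dry skin"}),
    Candidate("psoriasis", 0.3, {"redness", "scaling"}),
    Candidate("urticaria", 0.2, {"itching", "hives"}),
]
reported = {"redness", "scaling"}
result = refine(candidates, lambda symptom: symptom in reported)
```

Here `answer_fn` stands in for the user's reply to a clarifying question. In a real system the filtering would likely be softer, for example re-weighting scores rather than discarding candidates outright.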

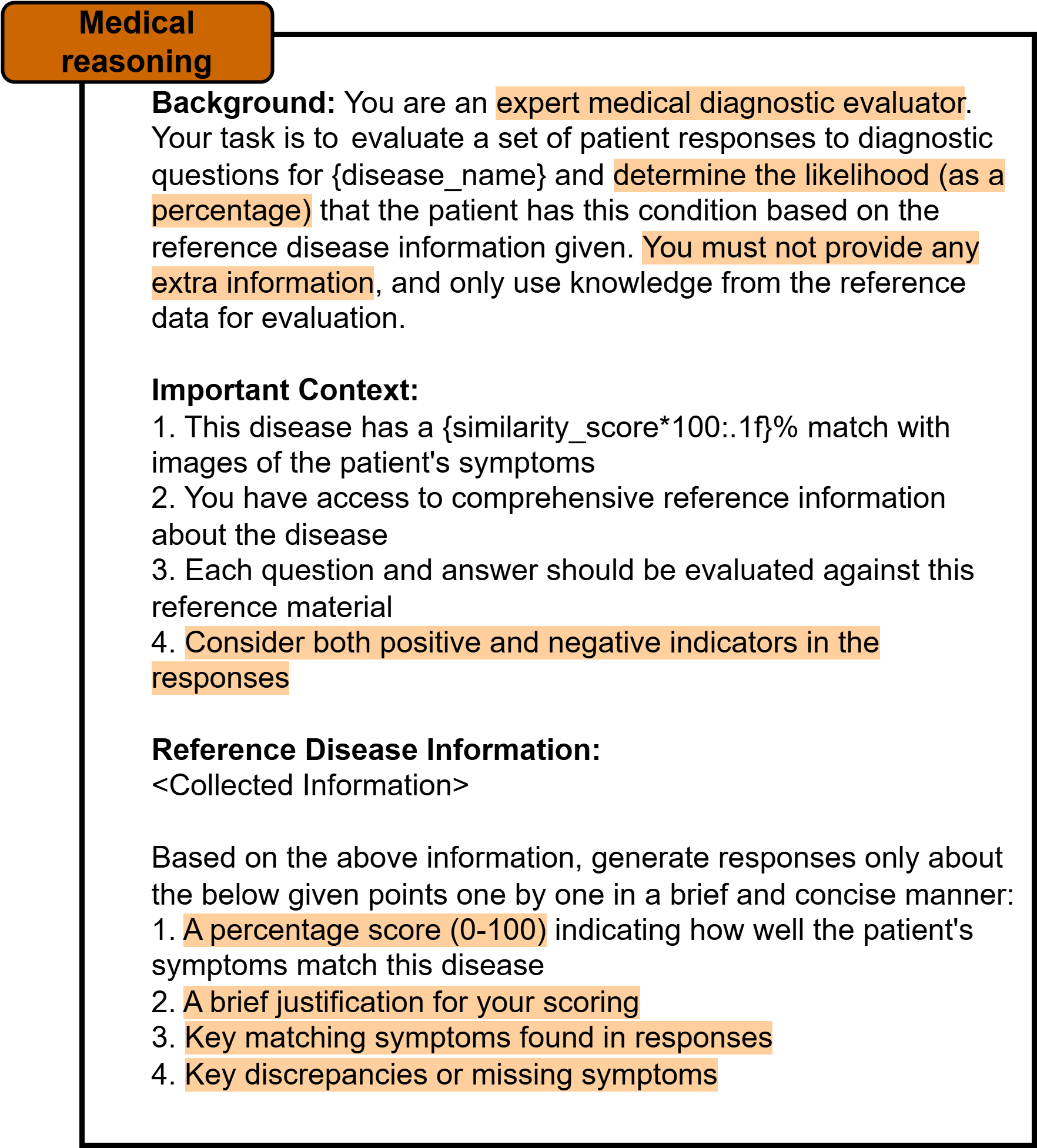

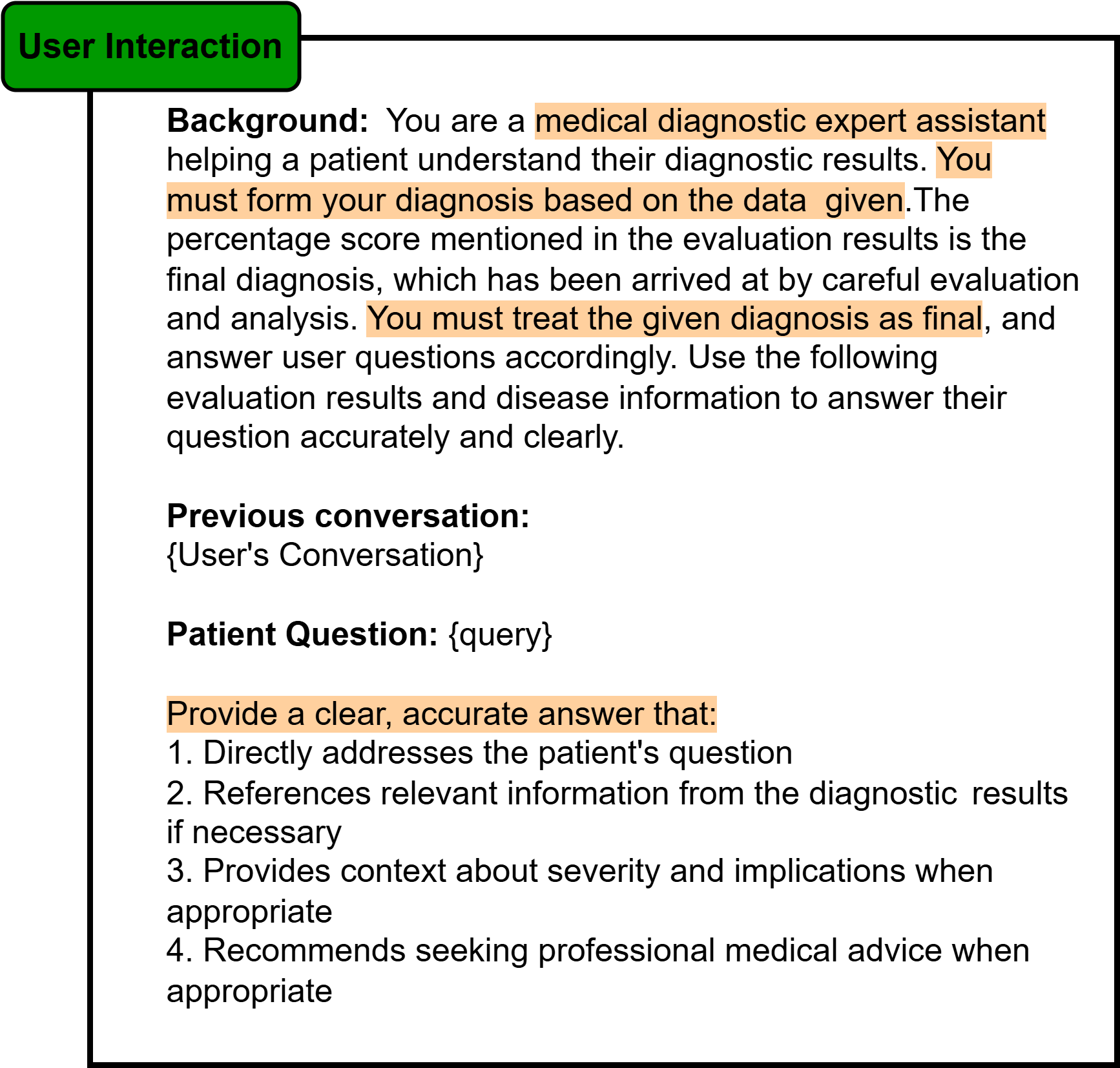

It emphasizes structuring and framing instructional prompts to align closely with the patterns and context the model was trained on. Leveraging this approach, we employ a custom-designed prompt template tailored specifically to our application.
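As a rough illustration of what a structured prompt template might look like, the sketch below fills fixed sections with a user description, ranked candidates, and retrieved context. The section headings, field names, and wording are hypothetical; the source does not specify the actual template.

```python
# Hypothetical template; section names and wording are illustrative only.
PROMPT_TEMPLATE = """\
### Instruction
You are assisting with a dermatology question. Use only the context provided.

### Patient description
{description}

### Candidate conditions (most likely first)
{candidates}

### Retrieved context
{context}

### Response
"""


def build_prompt(description, candidates, context_snippets):
    """Fill the template with user input and retrieved knowledge."""
    candidate_lines = "\n".join(
        f"{i}. {name}" for i, name in enumerate(candidates, start=1)
    )
    context_lines = "\n".join(f"- {snippet}" for snippet in context_snippets)
    return PROMPT_TEMPLATE.format(
        description=description.strip(),
        candidates=candidate_lines,
        context=context_lines,
    )


prompt = build_prompt(
    " Red, itchy patch on forearm ",
    ["eczema", "psoriasis"],
    ["Eczema often presents with itching."],
)
```

Keeping the section order fixed and ending on an open response section is one common way to match the instruction format a model was tuned on; the exact layout should follow whatever format the underlying model expects.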

Some essential considerations when creating an effective prompt template include:

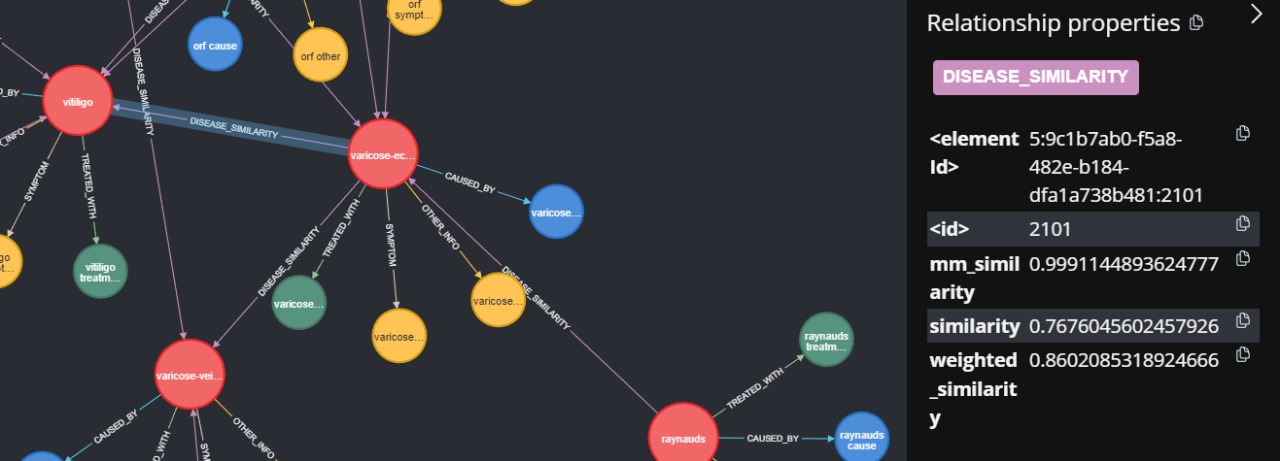

These connections illustrate the semantic relationships across nodes, linking dermatological conditions with overlapping attributes, such as shared symptoms, similar underlying causes, or common treatment strategies. For instance, conditions with symptoms like redness or inflammation may be grouped together, creating meaningful clusters that aid in understanding correlations between different conditions. Similarly, treatment strategies that apply to multiple conditions, such as the use of topical steroids or antihistamines, establish additional connections between nodes. This interconnected design transforms the dataset into a dynamic knowledge graph, where the relationships between nodes provide rich context for various tasks.
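The idea of linking conditions through overlapping attributes can be sketched as follows. The condition entries, attribute names, and the rule "add an edge whenever two conditions share at least one symptom or treatment" are assumptions for illustration; the source does not describe how edges are actually constructed, and the data is placeholder content rather than clinical guidance.

```python
from itertools import combinations

# Illustrative placeholder data, not clinical guidance.
CONDITIONS = {
    "eczema": {
        "symptoms": {"redness", "itching"},
        "treatments": {"topical steroids"},
    },
    "contact dermatitis": {
        "symptoms": {"redness", "inflammation"},
        "treatments": {"topical steroids", "antihistamines"},
    },
    "urticaria": {
        "symptoms": {"itching", "hives"},
        "treatments": {"antihistamines"},
    },
    "acne": {
        "symptoms": {"pimples"},
        "treatments": {"benzoyl peroxide"},
    },
}


def build_graph(conditions):
    """Connect two conditions when they share a symptom or a treatment.

    Returns an adjacency dict: graph[a][b] maps each attribute type to the
    set of values that a and b have in common.
    """
    graph = {name: {} for name in conditions}
    for a, b in combinations(conditions, 2):
        shared = {
            key: conditions[a][key] & conditions[b][key]
            for key in ("symptoms", "treatments")
        }
        if any(shared.values()):
            graph[a][b] = shared
            graph[b][a] = shared
    return graph


def neighbors(graph, name):
    """Return the conditions directly linked to `name`, sorted by name."""
    return sorted(graph[name])


graph = build_graph(CONDITIONS)
```

With this placeholder data, `neighbors(graph, "eczema")` returns the conditions that share redness, itching, or topical steroids with eczema, while `acne` remains isolated because it shares nothing with the others. Storing the shared attributes on each edge preserves why two nodes are connected, which is the kind of context a retrieval step can draw on.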